9. A simple Rescale simulation example#

Load tutorial into dapta app.

View files on GitHub.

Duration: 15 min

Rescale is a cloud-based platform for computational engineering and R&D that offers access to many commercial simulation software tools. This includes FEA and CFD solvers that are commonly used in industry.

In this example we demonstrate how to set up and execute a simple Rescale simulation from within a dapta analysis workflow.

The following sections will guide you through the creation of the simulation workflow starting from an empty workspace. Already signed-up? Access your workspace here.

9.1. Prerequisites#

The tutorial runs a Python script in miniconda on Rescale resources. You will need a Rescale account to execute the analysis. No other software licenses are required.

Although the Rescale job executes within a few seconds on a small compute node, be aware that it will use a very small amount of credit from your Rescale account.

Before you start, set up a dedicated Rescale API key by following the instructions in the advanced user guide: Using Rescale’s REST API. This API key is required to authenticate your account when interacting with the Rescale API via the dapta web app.

9.2. Create the component#

Navigate to your dapta dashboard and create a blank workspace by selecting New (if required).

Right-click in the workspace and select Add Empty Node.

This creates an empty template component in your workspace.

Select the component to edit it:

In the Properties tab, fill in the component name, rescale-comp, and select the component API rescale-comp:latest.

Copy the contents of the setup.py, compute.py and paraboloid.py files from below into a text editor and save them locally. Then upload the first two files under the Properties tab and upload the paraboloid.py file under the Parameters tab by selecting upload user input files.

Also in the Properties tab, check the boxes next to the Start Node and End Node options.

Insert the following “job” JSON object into the Parameters tab text box (below the “user_input_files” entry).

    {
      "job": {
        "API_token_name": "rescale",
        "analysis": {
          "code": "miniconda",
          "version": "4.8.4"
        },
        "archiveFilters": [
          {
            "selector": "*"
          }
        ],
        "command": "python paraboloid.py",
        "hardware": {
          "coreType": "emerald",
          "coresPerSlot": 1,
          "slots": 1,
          "walltime": 1
        },
        "name": "test",
        "envVars": {}
      }
    }

Select Save data to save and close the component.

The “job” parameter contains the standard Rescale job information:

“analysis”: a description of the analysis software by Rescale code and version number;

“hardware”: a description of the Rescale execution nodes you want to use;

“name”: a customizable job name;

“command”: a string of commands that should be executed to launch the analysis;

“archiveFilters”: a list of selectors used to filter the analysis output files returned to the dapta interface. Here we use the wildcard "*" to recover all analysis output files.

The value of “API_token_name” refers to the name of a dapta user secret as explained in the next section.
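Before pasting the JSON into the Parameters tab, it can be useful to check locally that the object parses and contains the fields described above. The following is a minimal sketch and not part of the dapta or Rescale tooling: the `check_job` helper is hypothetical, and its list of required keys is an assumption taken only from the example object in this tutorial.

```python
import json

# Keys copied from the example "job" object in this tutorial. The Rescale API
# may accept or require other fields (assumption, not checked here).
REQUIRED_KEYS = {"API_token_name", "analysis", "archiveFilters", "command", "hardware", "name"}
HARDWARE_KEYS = {"coreType", "coresPerSlot", "slots", "walltime"}


def check_job(parameters: dict) -> list:
    """Return a list of human-readable problems found in parameters["job"]."""
    job = parameters.get("job")
    if not isinstance(job, dict):
        return ['missing "job" object']
    problems = [f'missing key "{key}"' for key in sorted(REQUIRED_KEYS - job.keys())]
    hardware = job.get("hardware", {})
    problems += [f'missing hardware key "{key}"' for key in sorted(HARDWARE_KEYS - hardware.keys())]
    return problems


example = json.loads("""
{
  "job": {
    "API_token_name": "rescale",
    "analysis": {"code": "miniconda", "version": "4.8.4"},
    "archiveFilters": [{"selector": "*"}],
    "command": "python paraboloid.py",
    "hardware": {"coreType": "emerald", "coresPerSlot": 1, "slots": 1, "walltime": 1},
    "name": "test",
    "envVars": {}
  }
}
""")

# An empty list means that no problems were found.
print(check_job(example))
```

A `json.JSONDecodeError` raised by `json.loads` points to a syntax problem such as a missing comma or brace.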

setup.py:

    from datetime import datetime


    def setup(
        inputs: dict = {"design": {}, "implicit": {}, "setup": {}},
        outputs: dict = {"design": {}, "implicit": {}, "setup": {}},
        parameters: dict = {
            "user_input_files": [],
            "inputs_folder_path": "",
            "outputs_folder_path": "",
        },
    ) -> dict:
        """A user editable setup function."""

        # initialise setup_data keys
        response = {}

        message = f"{datetime.now().strftime('%Y%m%d-%H%M%S')}: Setup completed."
        print(message)
        response["message"] = message

        return response

compute.py:

    from datetime import datetime
    from pathlib import Path

    import rescale


    def compute(
        inputs: dict = {"design": {}, "implicit": {}, "setup": {}},
        outputs: dict = {"design": {}, "implicit": {}, "setup": {}},
        partials: dict = {},
        options: dict = {},
        parameters: dict = {
            "user_input_files": [],
            "inputs_folder_path": "",
            "outputs_folder_path": "",
        },
    ) -> dict:
        """A user editable compute function."""

        print("Starting user function evaluation.")

        inputs_folder = Path(parameters["inputs_folder_path"])
        run_folder = Path(parameters["outputs_folder_path"])

        inputs_paths = []
        for file in parameters["user_input_files"]:
            src = inputs_folder / file["filename"]
            inputs_paths.append(src)

        # define rescale job parameters
        job = parameters["job"]
        for key in ["coresPerSlot", "slots", "walltime"]:
            job["hardware"][key] = int(job["hardware"][key])

        # launch rescale job
        rescale.main(job, inputs_paths, run_folder)

        message = f"{datetime.now().strftime('%Y%m%d-%H%M%S')}: Rescale job completed."
        print(message)

        return {"message": message}
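The loop over `coresPerSlot`, `slots` and `walltime` in `compute.py` converts the hardware values to integers before the job is launched. The tutorial does not say why. One plausible reason (an assumption) is that values edited in the Parameters text box may arrive as floats or numeric strings. The standalone sketch below reproduces only that normalisation step on a hypothetical input. The `normalise_hardware` name is illustrative and the sketch does not call the `rescale` module.

```python
import copy


def normalise_hardware(job: dict) -> dict:
    """Return a copy of job with integer hardware settings, as in compute.py."""
    job = copy.deepcopy(job)  # avoid mutating the caller's dictionary
    for key in ["coresPerSlot", "slots", "walltime"]:
        job["hardware"][key] = int(job["hardware"][key])
    return job


# Hypothetical input in which the numbers were entered as a float and as strings.
raw_job = {
    "hardware": {"coreType": "emerald", "coresPerSlot": 1.0, "slots": "1", "walltime": "1"}
}
clean_job = normalise_hardware(raw_job)
print(clean_job["hardware"])
```

Note that `int()` truncates a float such as 1.9 to 1 and raises `ValueError` for a string such as "1.5", so non-integer values are best corrected in the Parameters tab rather than relied upon.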

paraboloid.py:

    from datetime import datetime


    def f_xy(x, y):
        out = {}
        out["f_xy"] = (x - 3.0) ** 2 + x * y + (y + 4.0) ** 2 - 3.0
        message = f"{datetime.now().strftime('%Y%m%d-%H%M%S')}: Compute paraboloid f(x:{str(x)},y:{str(y)}) = {str(out['f_xy'])}."
        out["message"] = message
        return out


    if __name__ == "__main__":
        out = f_xy(5.0, 5.0)
        print(out["message"])
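When run as a script, `paraboloid.py` evaluates the function at x = 5, y = 5. As a check on the expected result, the short sketch below (an illustration, not one of the tutorial files) evaluates each term of the expression separately and sums them.

```python
x, y = 5.0, 5.0

# The four terms of f(x, y) = (x - 3)^2 + x*y + (y + 4)^2 - 3
terms = {
    "(x - 3)^2": (x - 3.0) ** 2,
    "x * y": x * y,
    "(y + 4)^2": (y + 4.0) ** 2,
    "constant": -3.0,
}

for name, value in terms.items():
    print(f"{name:>10}: {value}")

total = sum(terms.values())
print(f"f({x}, {y}) = {total}")  # 4 + 25 + 81 - 3 = 107
```

The result, 107.0, is the value that appears in the process_output.log excerpt in section 9.5.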

9.3. Set up a user secret#

User Secrets are a secure way of saving third-party software/API tokens to your dapta user account.

In this section we create a User Secret in the dapta web app to save the Rescale API token:

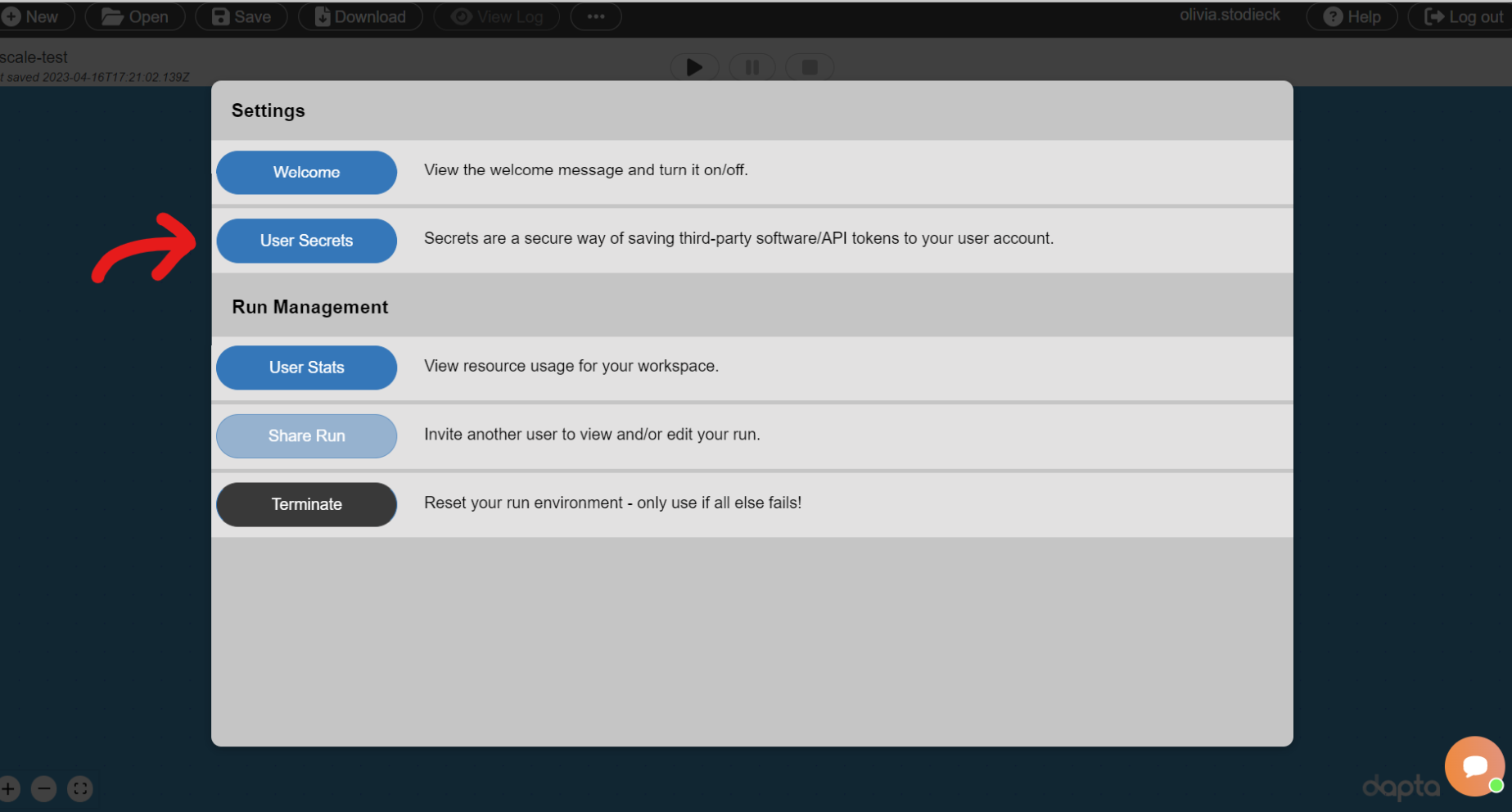

In the dapta interface controls, select the “…” button and then “User Secrets” as shown below.

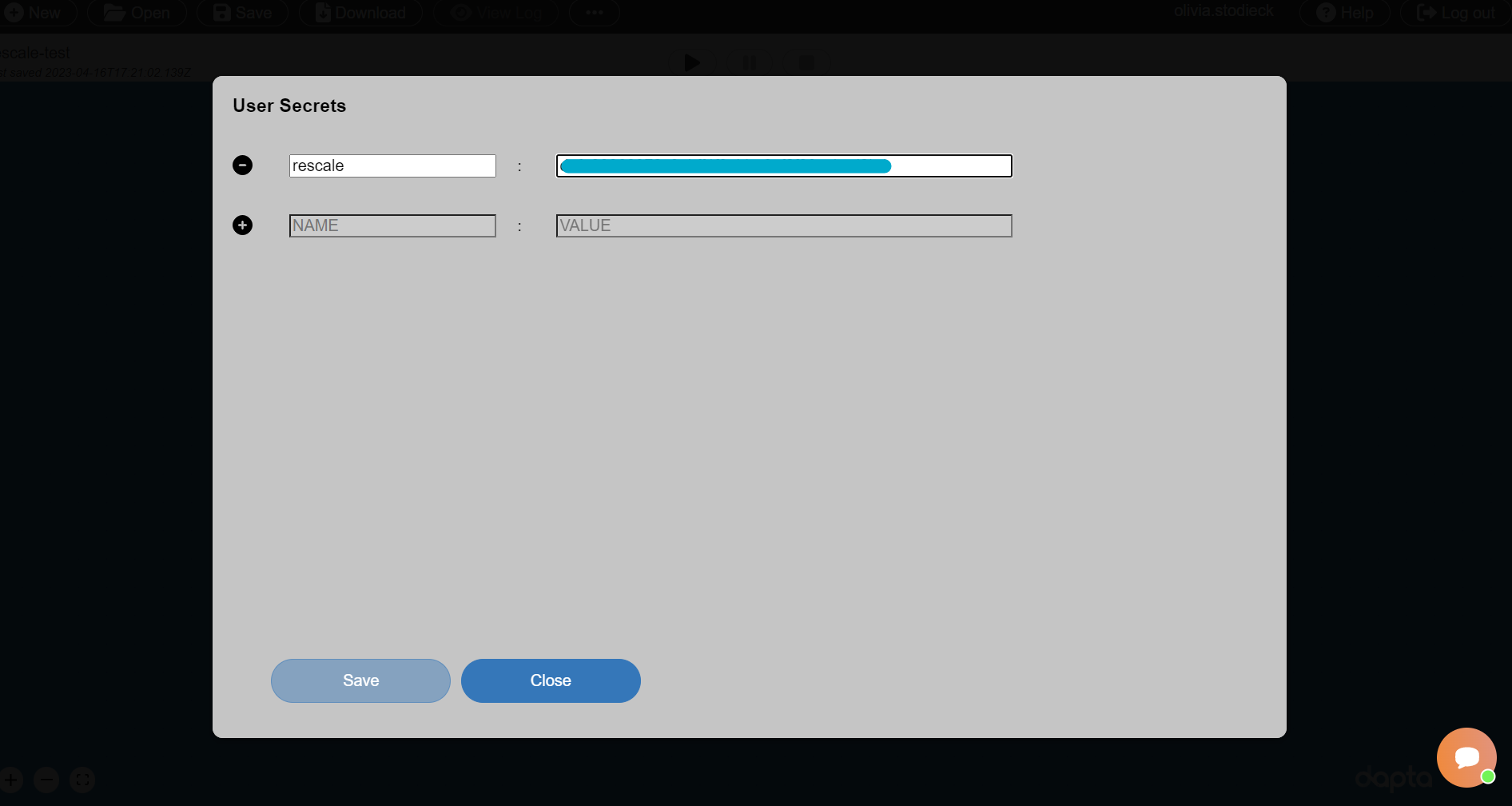

Select the “+” icon to add a user secret, which consists of a NAME and VALUE pair.

Enter “rescale” in the NAME box and the value of your Rescale API Token in the VALUE box as shown below.

Select Save to save the new User Secret, then select Close.

The rescale component created in the previous section can now access the token value by reference to the user secret name.
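The benefit of this indirection is that the component parameters only ever contain the name of the secret, never the token itself. How dapta resolves the name is not shown in this tutorial. The sketch below is purely illustrative: the `resolve_token` helper is hypothetical and an in-memory dictionary stands in for the secret store.

```python
def resolve_token(job: dict, user_secrets: dict) -> str:
    """Look up the token value referenced by job["API_token_name"]."""
    name = job["API_token_name"]
    try:
        return user_secrets[name]
    except KeyError:
        raise KeyError(f'no user secret named "{name}"') from None


# Stand-in for the User Secrets saved in the web app (assumption).
user_secrets = {"rescale": "<your-rescale-api-token>"}

# Only the secret NAME appears in the component parameters.
job = {"API_token_name": "rescale"}

token = resolve_token(job, user_secrets)
print(token == user_secrets["rescale"])
```

If the NAME entered in the User Secrets dialog does not match the “API_token_name” value in the job parameters, a lookup of this kind fails, so the two strings need to be identical.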

9.4. Execute the workflow#

We can now execute the Run by selecting the play symbol ▶ in the Run controls interface. Once the run has started, the component will set up and then execute. The Run should complete within 5-10 min.

Note

When the dapta component is executing you can also view the job status in the Rescale web interface. However, do not edit the job or the files manually in Rescale, as this can cause dapta to crash.

9.5. Inspect the outputs#

Select View Log in the interface controls to view a summary of the Run as a nested JSON text object.

Select the rescale-comp component in the workspace to open it and then navigate to the Log tab.

The Log tab includes a download files snapshot link. Select this to download a zip file that contains all input and output files as they currently exist in your workspace for this component.

The “outputs” folder includes all analysis output files (here the unmodified paraboloid.py only), as well as the following two files:

input_files.zip : a compressed archive that is uploaded to Rescale and contains all input files (here paraboloid.py only);

process_output.log : a log file that contains a list of time-stamped events and messages as shown below.

    [2023-04-12T13:48:40Z]: Launching python paraboloid.py, Working dir: /enc/udeprod_gmhfPb/work/shared. Process output follows:
    [2023-04-12T13:48:40Z]: 20230412-134840: Compute paraboloid f(x:5.0,y:5.0) = 107.0.
    [2023-04-12T13:48:40Z]: Exited with code 0
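To check the outcome of a job without reading the log by eye, the time-stamped lines can be parsed with a few lines of Python. This is a minimal sketch that assumes every line follows the `[timestamp]: message` pattern of the excerpt above and that the final status is reported as `Exited with code N`. Logs from other jobs may differ, and the `parse_log` helper is hypothetical.

```python
import re

# Patterns inferred from the three-line excerpt in this tutorial (assumption).
LOG_LINE = re.compile(r"^\[(?P<timestamp>[^\]]+)\]: (?P<message>.*)$")
EXIT_CODE = re.compile(r"^Exited with code (?P<code>-?\d+)$")


def parse_log(text: str):
    """Return (entries, exit_code); entries is a list of (timestamp, message)."""
    entries = []
    exit_code = None
    for line in text.splitlines():
        match = LOG_LINE.match(line.strip())
        if not match:
            continue  # skip blank or unrecognised lines
        message = match.group("message")
        entries.append((match.group("timestamp"), message))
        exit_match = EXIT_CODE.match(message)
        if exit_match:
            exit_code = int(exit_match.group("code"))
    return entries, exit_code


sample = """\
[2023-04-12T13:48:40Z]: Launching python paraboloid.py, Working dir: /enc/udeprod_gmhfPb/work/shared. Process output follows:
[2023-04-12T13:48:40Z]: 20230412-134840: Compute paraboloid f(x:5.0,y:5.0) = 107.0.
[2023-04-12T13:48:40Z]: Exited with code 0
"""

entries, exit_code = parse_log(sample)
print(len(entries), exit_code)
```

In practice the text would be read from the process_output.log file in the downloaded snapshot. An exit code of 0 indicates that the command completed without error.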

9.6. Clean-up#

Delete your session by selecting New in the interface.

It may take a minute or so for the Cloud session to be reset.